Data is currently at

https://data.giss.nasa.gov/gistemp/tabledata_v4/GLB.Ts+dSST.csv

or

https://data.giss.nasa.gov/gistemp/tabledata_v4/GLB.Ts+dSST.txt

(or such updated location for this Gistemp v4 LOTI data)

January 2024 might show as 124 in hundredths of a degree C; this is +1.24C above the 1951-1980 base period. If it shows as 1.22 then it is in degrees, i.e. 1.22C. The same logic/interpretation will be applied here.

If the version or base period changes then I will consult with traders over the best way for any such change to have the least effect on betting positions, or consider N/A if it is unclear what the sensible least-effect resolution should be.

Numbers are expected to be displayed to a hundredth of a degree. The extra digit used here is to ensure understanding that +1.20C resolves to an 'exceed 1.195C' option.
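As a rough illustration of the interpretation rule above, the sketch below normalizes a reported value to degrees C and maps it to a bin. The function names, the decimal-point heuristic and the bin edges (taken from the bins quoted later in this thread) are assumptions made for illustration, not part of the resolution rules.

```python
from bisect import bisect_right

# Bin edges in degrees C; the half-step edges mean a displayed +1.20 exceeds 1.195.
EDGES = [1.195, 1.245, 1.295, 1.345, 1.395, 1.445]
LABELS = ["<1.195", "1.195-1.245", "1.245-1.295", "1.295-1.345",
          "1.345-1.395", "1.395-1.445", ">1.445"]

def to_degrees(raw: str) -> float:
    """Assumed heuristic: a value with a decimal point is already in degrees C,
    an integer such as '124' is in hundredths of a degree."""
    raw = raw.strip()
    return float(raw) if "." in raw else int(raw) / 100

def bin_for(anomaly_c: float) -> str:
    """Return the label of the bin containing the anomaly."""
    return LABELS[bisect_right(EDGES, anomaly_c)]

print(bin_for(to_degrees("124")))   # value given in hundredths
print(bin_for(to_degrees("1.20")))  # value given in degrees
```

Because displayed values are rounded to a hundredth and the edges sit at half-steps, a displayed value can never land exactly on an edge.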

Resolves per the first update seen by me or posted, as long as there is no reason to think the data shown is in error. If there is reason to think there may be an error then resolution will be delayed at least 24 hours. A minor later update should not cause a need to re-resolve.

Any interest in ENSO prediction

SON -0.3

OND -0.4

NDJ -0.5

DJF -0.6

Will JFM be -0.5 or more negative?

https://origin.cpc.ncep.noaa.gov/products/analysis_monitoring/ensostuff/ONI_v5.php

If yes good odds available:

There are even forecasts around showing probabilities like

Season La Niña Neutral El Niño

JFM 95 5 0

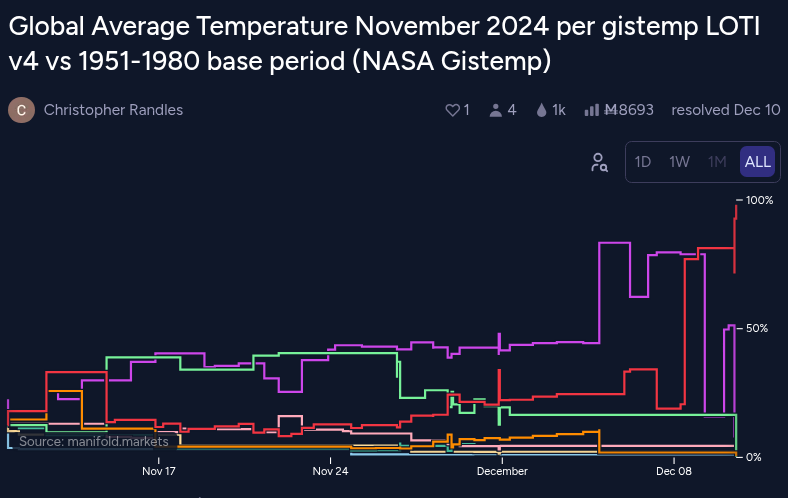

@LeonardoParaiso One is dated 4th other 3rd. So more time for updates/corrections/quality control fixes etc.

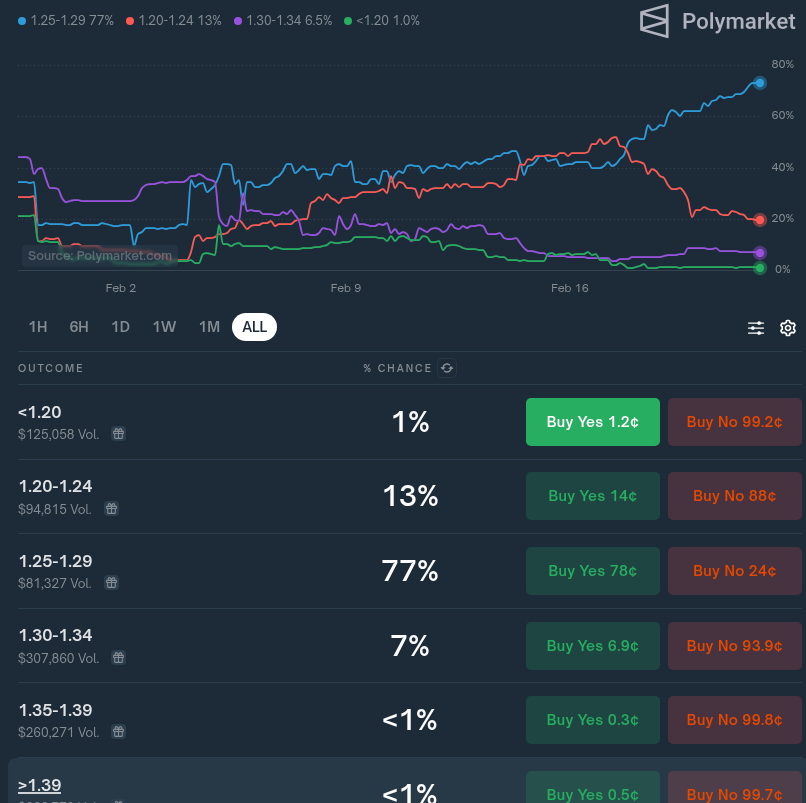

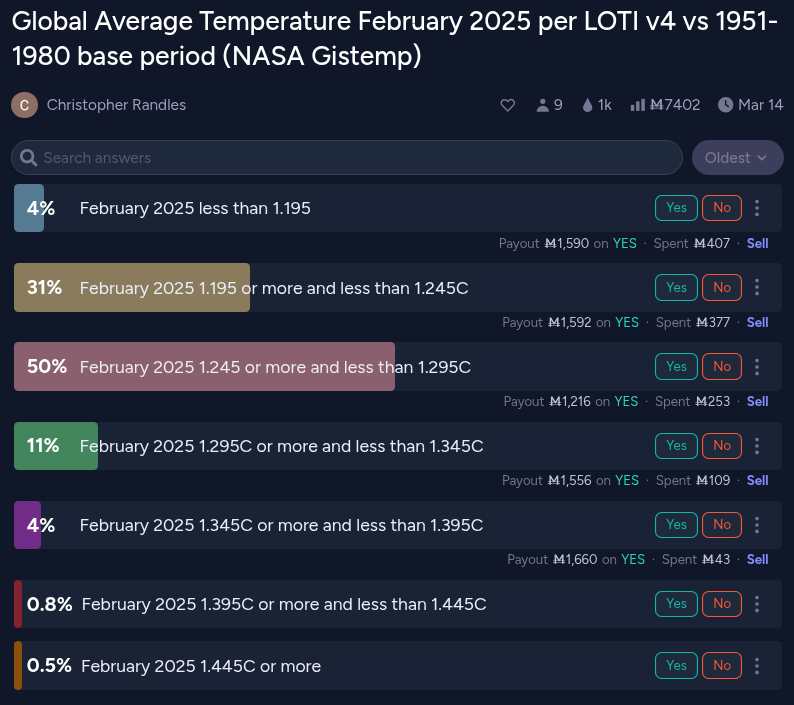

So one would suspect 4th should be more likely to be accurate than 3rd but of course there could be later adjustments the other way so maybe not that much in it. Also in the past we have seen updates and then it seems like they select an earlier dated run for completely unknown reasons. 4% 93% seems like traders are pretty sure which way it will go and while I am not confident that it shouldn't be closer odds I am not sure I want to bet on the lower '1.195 or more and less than 1.245C range' and not on the 'February 2025 1.245 or more and less than 1.295C' because it is likely other traders know better than me.

@ChristopherRandles Hm, i see. So on the 5th of every month is pretty much when markets get decided it seems. Good to know.

Not sure about that. I'm sure some of the other traders like aenews might know better, but for now I'm operating under the assumption that they usually do a Friday run during the week prior to the release date (the release date is next week); The ghcnm has been coming out late at night ~9-10pm EST so I expect the earliest you could know is late Thursday night.

Sometimes the runs may drift quite a bit before such a date (I've observed maybe up to 0.03), but that also depends on when the ersst data comes out, so there can be a larger drift by the time the last run is executed.

At the very least they give you a ballpark of which two bins are correct this early on and which are infeasible.

ghcnm.v4.0.1.20250306:

125.46

Still moving around a bit but my best guess is it will end up at 1.25 in the official release next week (as I guess they will do the run tomorrow during the normal work hours)

My shares in the neighboring bins would sell for <50 mana, but I deem them not worth selling, considering they are worth 8 times that on the very small chance that they end up using a run from much later on (like Monday); not that I expect it to fall below 124.5 again.

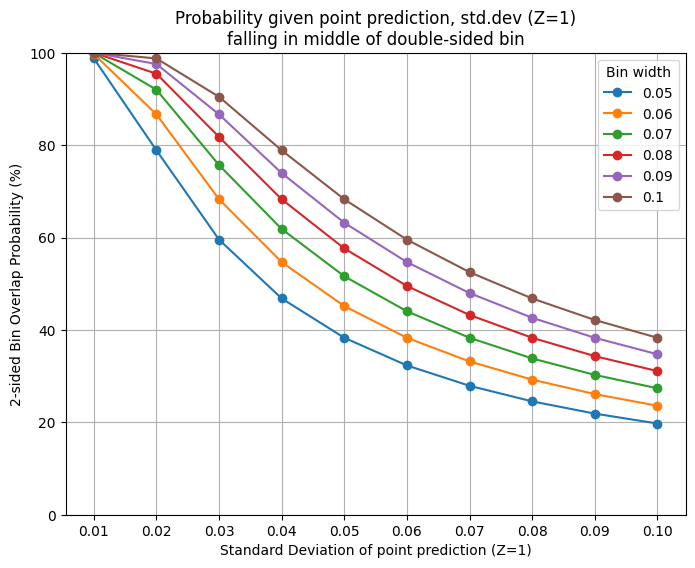

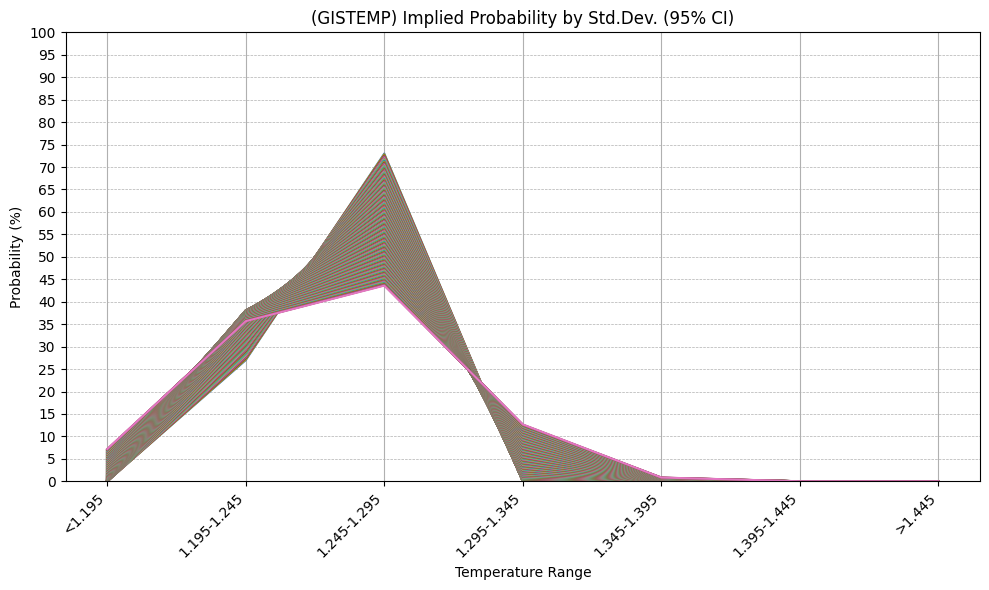

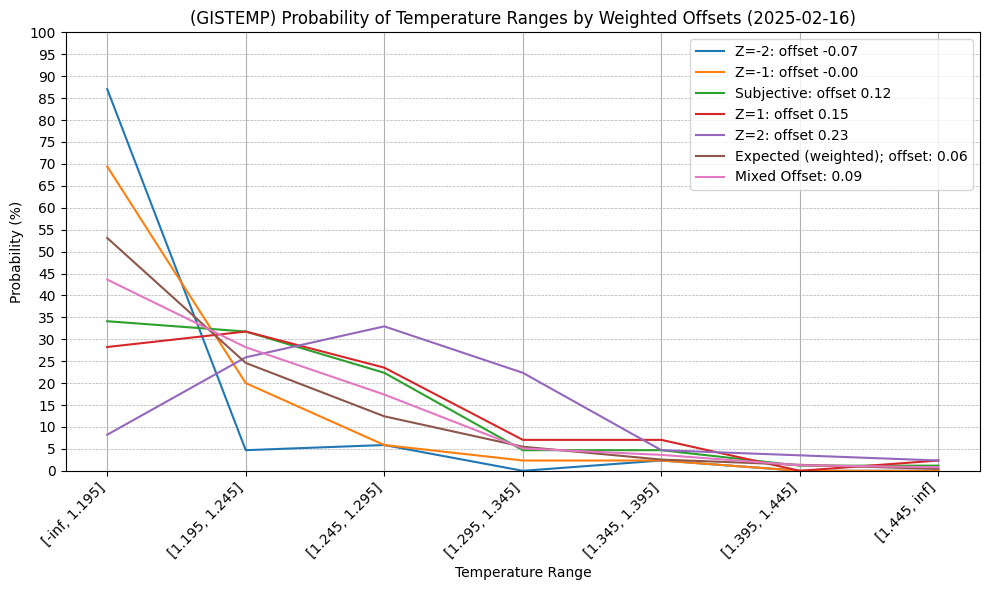

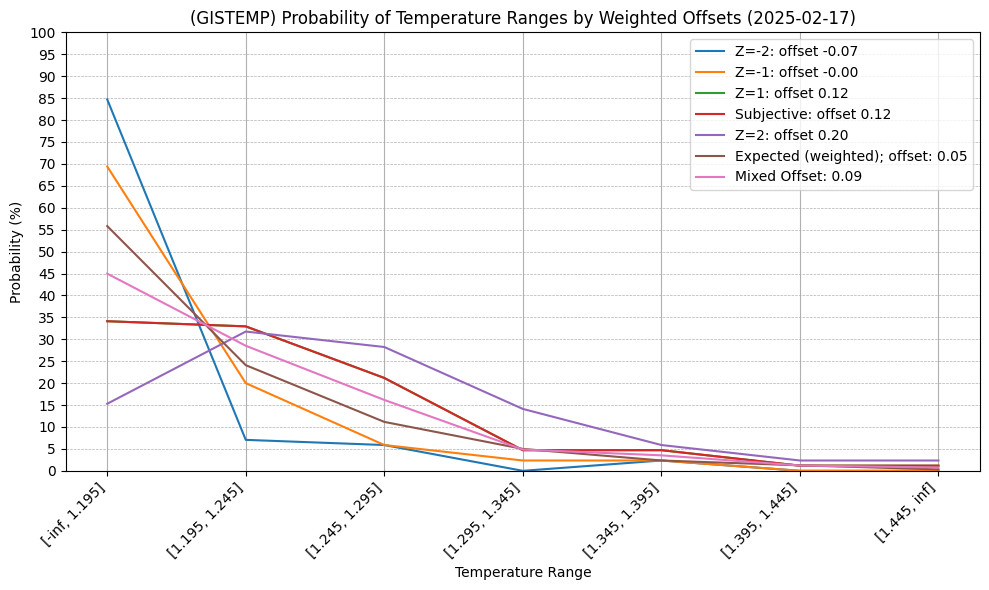

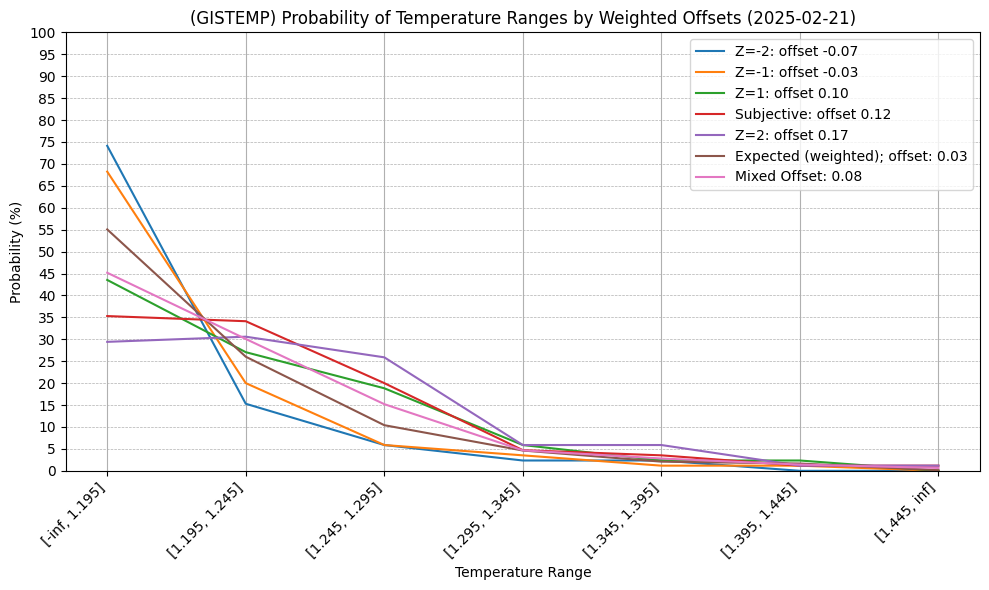

Ok, after some thinking I realized it would be better to go the other way around and infer the upper-bound std. dev. (Z=1) for a given point prediction for double-sided bins (by assuming the point prediction falls in the center of the bin). For example, since the Polymarket dominant bin is at 73% with a bin width of 0.05 (blue line), and +-1 std. dev. only covers ~68%, it implies a std. dev. < 0.025 for their point prediction.

For this market the dominant bin is presently a one-sided bin so this is less relevant now, but will be for the future. I hope this is useful to bettors.
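A minimal sketch of that inference, assuming a normal distribution centered in the bin (the function name is made up for illustration). It returns the std. dev. at which exactly the quoted share of probability falls inside the bin; if the point prediction is off-center, the std. dev. would have to be smaller still, which is why this is an upper bound.

```python
from statistics import NormalDist

def implied_sigma(bin_prob: float, bin_width: float) -> float:
    """Std. dev. of a normal centered in a bin of the given width
    that puts exactly bin_prob of its mass inside the bin."""
    z = NormalDist().inv_cdf(0.5 + bin_prob / 2)  # half-width in units of sigma
    return (bin_width / 2) / z

print(round(implied_sigma(0.73, 0.05), 4))    # the 73% / 0.05-wide example
print(round(implied_sigma(0.6827, 0.05), 4))  # ~68% in the bin corresponds to Z=1
```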

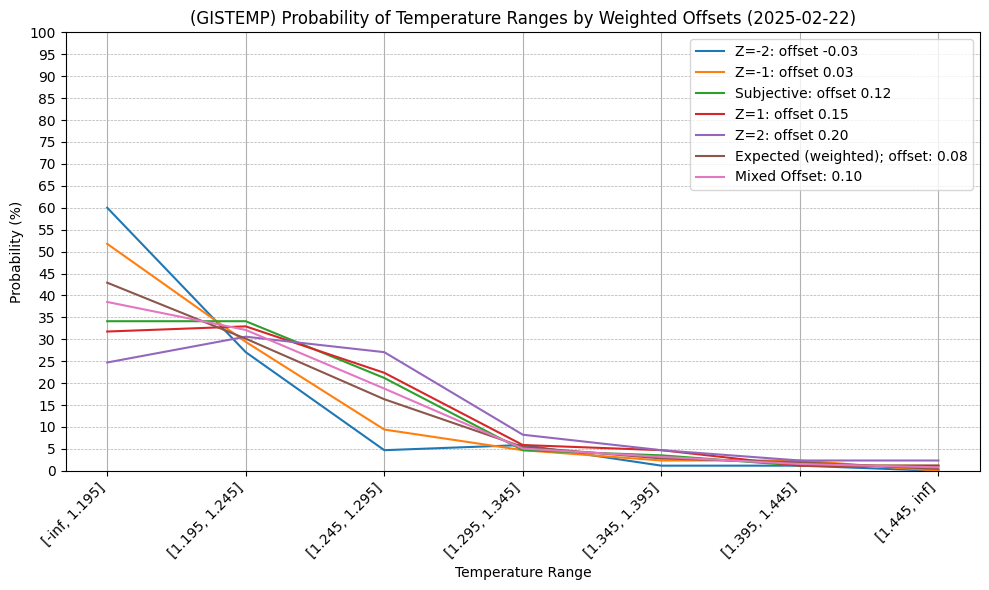

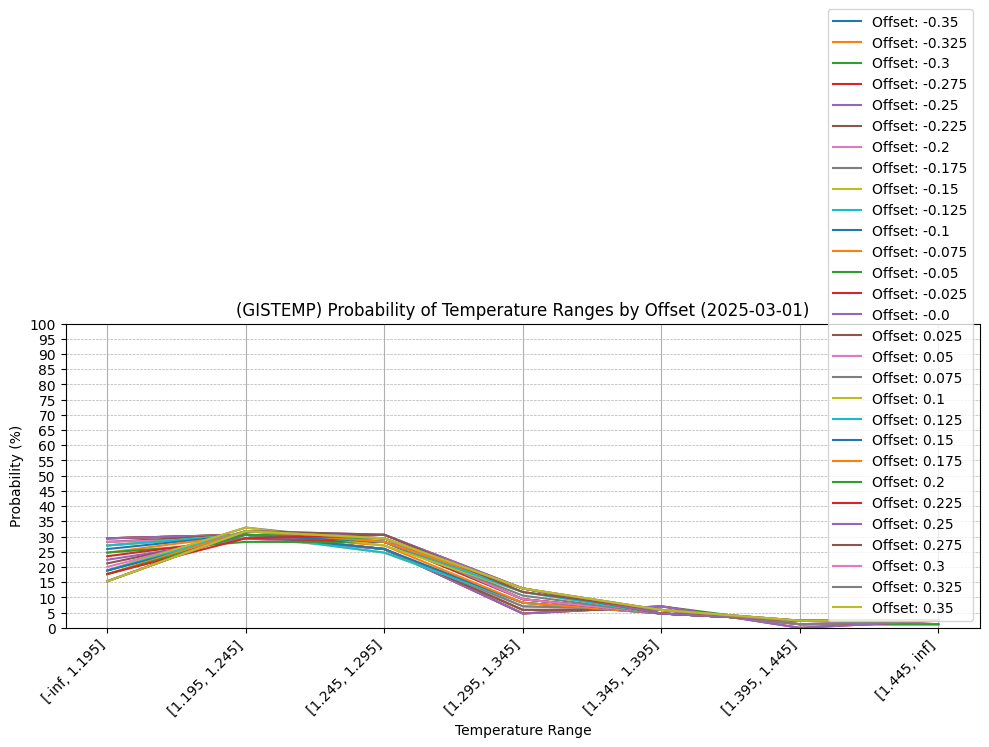

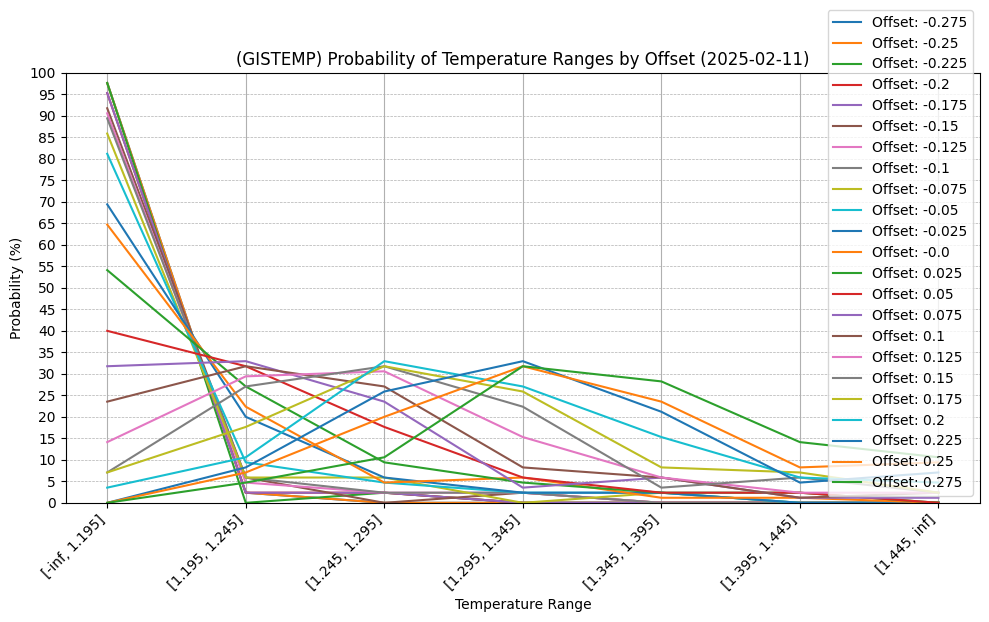

For future reference, 7 days out from end of month. (02/22/2025), the mixed offset prediction suggests the first bin still contains most of the probability mass:

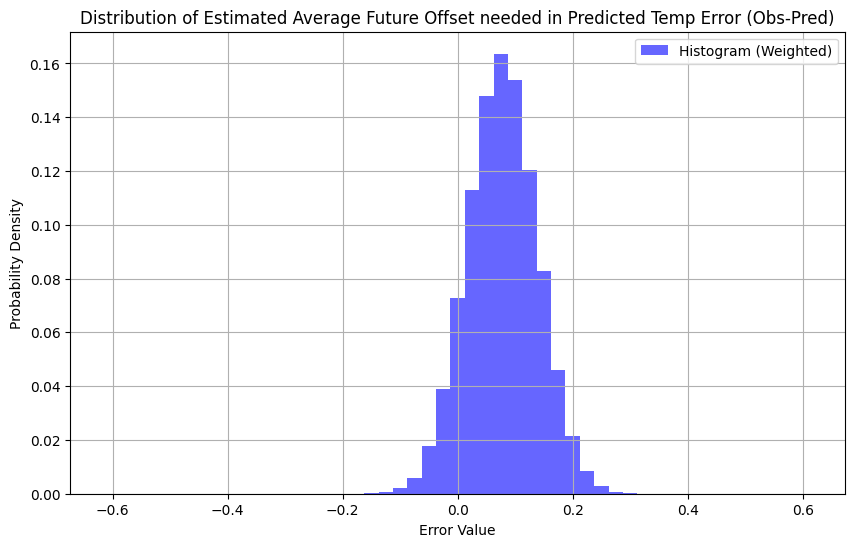

The peak of the distribution actually falls in the 1.195-1.245 bin using offsets of ~0.025 to 0.200, where right now the mixed offset is 0.100 (after a somewhat large error jump from yesterday; now OU = 0.08, whereas yesterday it was ~0.03).

This mixed offset (0.100) corresponds to a point prediction that mismatches the bin probabilities. It is a discretization artifact: the (weighted) probability distribution uses the (meta) prediction for the temps for the rest of the month (OU model offset) and the result is mapped onto bins. It arises because the question's bins are too narrow relative to the std. dev., so the peak of the distribution doesn't fall into the bin with the most probability mass.

All gistemp years included, offset 0.1, weight 0.1537099999999999

GIS TEMP anomaly projection (February 2024) (corrected, assuming -0.058 error, (absolute_corrected_era5: 13.262)):

1.218 C +-0.066

The current dominant bin is actually <1.195, hence the mismatch and seeming statistical incoherence. Most of the probability mass using the OU model is indeed in the <1.195 bin despite the peak of the PDF being in a different bin.

For reference, the 0 offset prediction is 1.191 (which is the highest offset we get that has a prediction that falls under the <1.195 bin):

All gistemp years included, offset -0.0, weight 0.0729

GIS TEMP anomaly projection (February 2024) (corrected, assuming -0.057 error, (absolute_corrected_era5: 13.235)):

1.191 C +-0.066

Edit: for reference, a comparison against Polymarket:

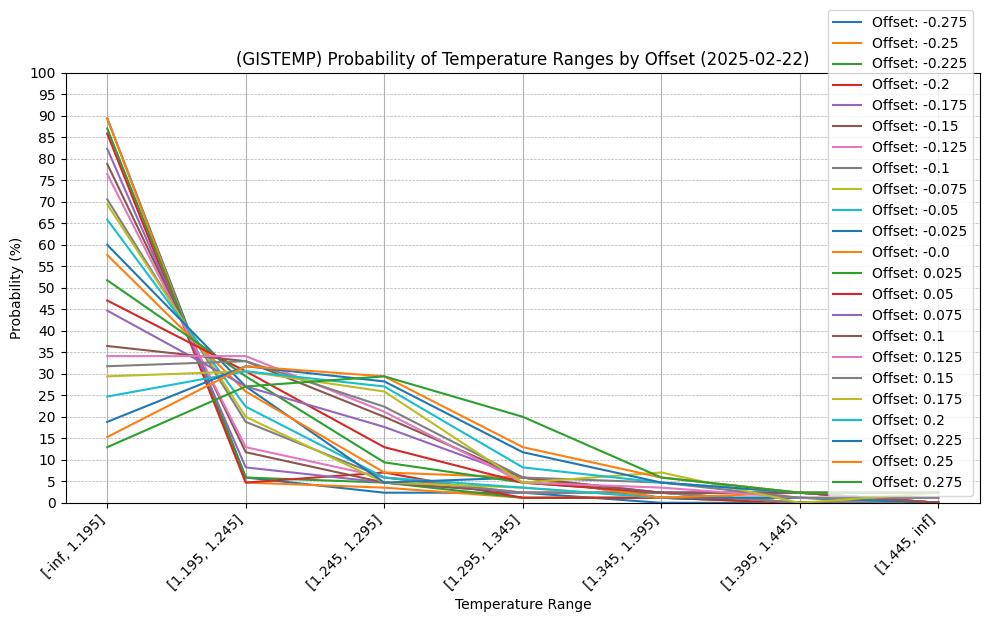

All offsets (not weighted by OU distribution probability weights)

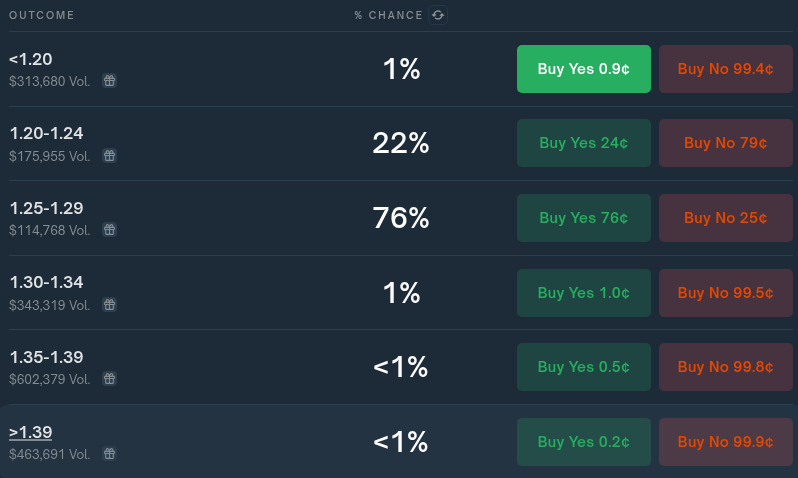

Polymarket has 77% under 1.25-1.29 (this corresponds to an offset range in my model where the peak of the point predictions is in [0.2, 0.375], with offsets of 0.275 or 0.300 producing point predictions close to 1.27 which is in the middle of the bin.):

All gistemp years included, offset 0.275, weight 0.0008

GIS TEMP anomaly projection (February 2024) (corrected, assuming -0.061 error, (absolute_corrected_era5: 13.309)):

1.265 C +-0.066

All gistemp years included, offset 0.3, weight 0.0001899999999999

GIS TEMP anomaly projection (February 2024) (corrected, assuming -0.062 error, (absolute_corrected_era5: 13.316)):

1.272 C +-0.066

This corresponds to the tail of the distribution in my OU model's chart (obs-pred distribution, weights flipped to match the necessary offset):

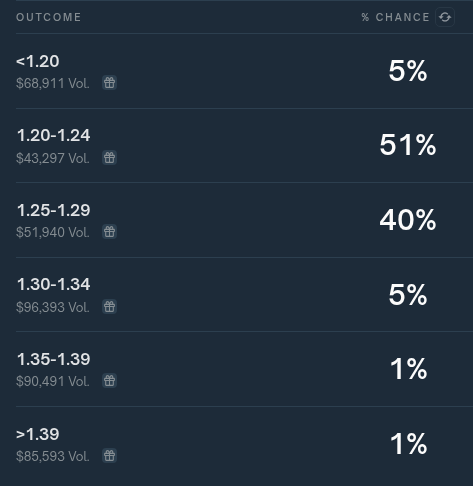

Polymarket probs 2/22 ~10 PM ET:

Edit 2:

As a further double check of the charting and weight code calculations, we check the manual output for the exceedance probabilities for the mixed offset and calculate the bins manually based off the exceedance point prediction probabilities alone:

==============================================================

All gistemp years included, offset 0.1, weight 0.1537099999999999

==============================================================

GIS TEMP anomaly projection (February 2024) (corrected, assuming -0.058 error, (absolute_corrected_era5: 13.262)):

1.218 C +-0.066

Calculations for % tying for given anomaly ranges:

Error to tie monthly exceedance of 1.195 C (for February 2025): -0.023

% of years for February that have error > than the error needed to tie for exceedance of 1.195 C February 2024

(% chance of (tying or) not breaking anomaly record for February): 63.53 %

Error to tie monthly exceedance of 1.245 C (for February 2025): 0.027

% of years for February that have error > than the error needed to tie for exceedance of 1.245 C February 2024

(% chance of (tying or) not breaking anomaly record for February): 30.59 %

Error to tie monthly exceedance of 1.295 C (for February 2025): 0.077

% of years for February that have error > than the error needed to tie for exceedance of 1.295 C February 2024

(% chance of (tying or) not breaking anomaly record for February): 10.59 %

Error to tie monthly exceedance of 1.345 C (for February 2025): 0.127

% of years for February that have error > than the error needed to tie for exceedance of 1.345 C February 2024

(% chance of (tying or) not breaking anomaly record for February): 4.71 %

Error to tie monthly exceedance of 1.395 C (for February 2025): 0.177

% of years for February that have error > than the error needed to tie for exceedance of 1.395 C February 2024

(% chance of (tying or) not breaking anomaly record for February): 2.35 %

Error to tie monthly exceedance of 1.445 C (for February 2025): 0.227

% of years for February that have error > than the error needed to tie for exceedance of 1.445 C February 2024

(% chance of (tying or) not breaking anomaly record for February): 0.00 %

The first bin from the point predictions is ~36% (100-64%)

The second bin is ~33% (63-30%)

The third bin is ~20% (30-10%)

The fourth bin is ~6% (10-4%)

The fifth and sixth bin are ~2%

This is consistent with the weighted calculations done in the first chart.
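The manual check above amounts to differencing consecutive exceedance probabilities. A small sketch, using the percentages from the output above (the function and variable names are made up for illustration):

```python
# Exceedance probabilities (%) copied from the output above, keyed by threshold (deg C).
exceed_pct = {1.195: 63.53, 1.245: 30.59, 1.295: 10.59,
              1.345: 4.71, 1.395: 2.35, 1.445: 0.00}

def bins_from_exceedance(exceed: dict[float, float]) -> list[float]:
    """Difference consecutive exceedance probabilities to get per-bin mass.
    Returns len(exceed) + 1 values: open lower bin first, open upper bin last."""
    probs = [exceed[t] for t in sorted(exceed)]
    bounds = [100.0] + probs + [0.0]
    return [hi - lo for hi, lo in zip(bounds, bounds[1:])]

bin_pct = bins_from_exceedance(exceed_pct)
for i, p in enumerate(bin_pct, start=1):
    print(f"bin {i}: {p:.2f}%")
```

The six thresholds give seven bins; the seventh (>1.445) gets whatever mass exceeds the last threshold, which is zero here.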

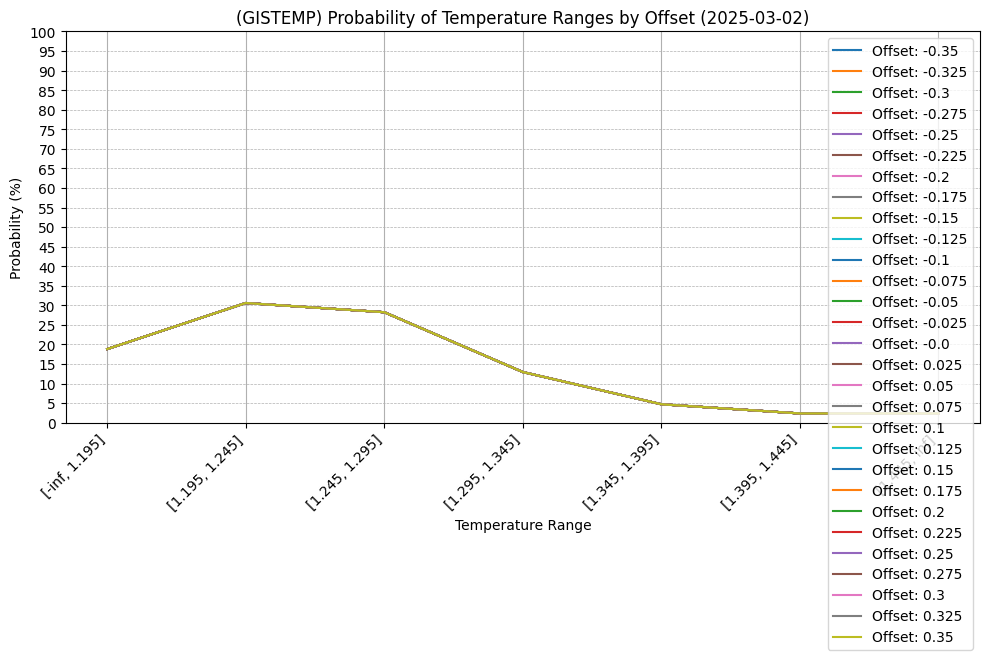

@LeonardoParaiso Still waiting on the last day of data from ERA5, but GEFS error was high for the end of the month, so most of the point predictions have shifted to the third bin as the most likely, with the probability shifting mostly to the 2nd and 3rd bins.

Based on what I expect for the last day's temps, most of the point predictions are placed near the low edge of the third bin though: ~1.25 or ~1.26 +- 0.066 depending on the final day's temps (with 1.26 being more likely due to rounding). Despite this, the error and exceedance probability model has the 2nd and 3rd bins at roughly equal probability.

In the below chart though I am expecting an offset somewhere in the neighborhood of +0.2 to +0.3 based on recent trends (so the -0.1 to -0.3 set is not plausible to me)

As I've said, my model at the end of the month doesn't come anywhere nearly as sharp as polymarket which has 15% and 83% as the 2nd and 3rd bins currently, respectively. (The exception would have been if the point prediction was on a tail end of one of the open ended bins, i.e. in a case like earlier in the month where the end of the months temps were predicted to be quite a bit cooler, which they weren't.)

Final day of ERA5 data is in, looks closer to 1.25 now:

GIS TEMP anomaly projection (February 2024) (corrected, assuming -0.060 error, (absolute_corrected_era5: 13.296)):

1.252 C +-0.066

The error model (simple historical and linear model), though, has a distribution with the 2nd bin holding slightly more probability despite this. This can be interpreted in a number of ways, including that my model's distribution has a fatter left tail, or, counterfactually, that with a different subset of data (one that doesn't go all the way back to years with larger errors) it might be a sharper/narrower distribution and might favor the 3rd bin more.

For reference, Polymarket has the 2nd bin at 22% and the third bin at 76%, with all other bins around 1% or less. (These two bin probabilities correspond to a std. dev. of approximately 0.01 C for a normal distribution.)

For the bet today, based on this point in time and the past 7 months of final prediction errors from the above model (calculated in an earlier comment below: https://manifold.markets/ChristopherRandles/global-average-temperature-february#2qxy88u7m78), it is likely that the std. dev. of +-0.066 is too wide (it is calculated from a validation set, which may include much older data with much larger variance). A chi-squared test on the limited sample of 7 implies the std. dev. should be between [0.0114, 0.039] with 95% confidence, or [0.0097, 0.0598] with 99.5% confidence.
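For reference, the standard two-sided confidence interval for the standard deviation of normally distributed errors is sketched below; that this is the exact form of the chi-squared calculation used above is an assumption. Here $n$ is the number of errors ($n = 7$), $s$ is their sample standard deviation, and $\chi^2_{p,\,n-1}$ is the $p$-quantile of the chi-squared distribution with $n-1$ degrees of freedom. The symbols are introduced here for illustration.

```latex
\sqrt{\frac{(n-1)\,s^2}{\chi^2_{1-\alpha/2,\;n-1}}}
\;\le\; \sigma \;\le\;
\sqrt{\frac{(n-1)\,s^2}{\chi^2_{\alpha/2,\;n-1}}}
```

With $n = 7$ and $\alpha = 0.05$ the quantiles are $\chi^2_{0.975,\,6} \approx 14.45$ and $\chi^2_{0.025,\,6} \approx 1.24$, so the interval runs from about $0.64\,s$ to about $2.20\,s$. The asymmetry is why the upper limit sits much further from the point estimate than the lower one.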

If I just assume a normal distribution for the data, then the probs should fall between these ranges:

95% Confidence Interval for Standard Deviation: (0.0114, 0.0390)

| Bin | Probability (std. dev. 0.0114) | Probability (std. dev. 0.0390) |
|---|---|---|
| <1.195 | 0.0000 | 0.0721 |
| 1.195-1.245 | 0.2700 | 0.3567 |
| 1.245-1.295 | 0.7299 | 0.4358 |
| 1.295-1.345 | 0.0001 | 0.1267 |
| 1.345-1.395 | 0.0000 | 0.0085 |
| 1.395-1.445 | 0.0000 | 0.0001 |
| >1.445 | 0.0000 | 0.0000 |
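The bin probabilities listed above can be approximately reproduced with a short sketch, assuming a normal distribution centered on the 1.252 point prediction (names are made up for illustration). Small last-digit differences from the listed values are possible, since the unrounded point prediction isn't given.

```python
from statistics import NormalDist

EDGES = [1.195, 1.245, 1.295, 1.345, 1.395, 1.445]

def bin_probs(mean: float, sd: float, edges=EDGES) -> list[float]:
    """Probability mass of N(mean, sd) in each bin, including both open-ended bins."""
    cdf = [0.0] + [NormalDist(mean, sd).cdf(e) for e in edges] + [1.0]
    return [hi - lo for lo, hi in zip(cdf, cdf[1:])]

# 1.252 is the point prediction quoted earlier; the std. devs. are the 95% CI limits.
for sd in (0.0114, 0.0390):
    print(sd, [round(p, 4) for p in bin_probs(1.252, sd)])
```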

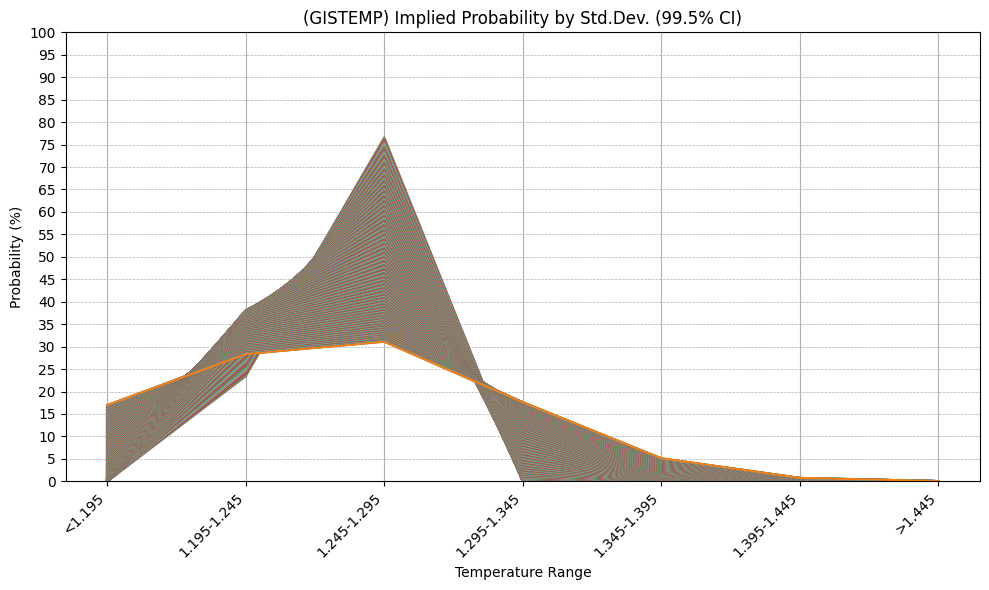

99.5% Confidence Interval for Standard Deviation: (0.0097, 0.0598)

| Bin | Probability (std. dev. 0.0097) | Probability (std. dev. 0.0598) |
|---|---|---|
| <1.195 | 0.0000 | 0.1704 |
| 1.195-1.245 | 0.2341 | 0.2830 |
| 1.245-1.295 | 0.7659 | 0.3104 |
| 1.295-1.345 | 0.0000 | 0.1761 |
| 1.345-1.395 | 0.0000 | 0.0516 |
| 1.395-1.445 | 0.0000 | 0.0078 |
| >1.445 | 0.0000 | 0.0006 |

I produced a chart to show this more visually:

Before my bet tonight, I look at Manifold's current probabilities and whether they are in the implied probability ranges suggested by the past performance of the model (assuming a normal distribution):

The first bin is currently at 4%, and is in range (0-7%).

The second bin is currently at 29%, and is in range (~27-36%)

The third bin at 48%, and is in range (~44-73%).

The fourth bin is currently at 13%, which is at/or just out of range (0-13%).

The fifth bin is at 3%, which is out of range (0-1%) only for the 95% CI; it is in range for the 99.5% CI.

And the sixth and seventh bin are also out of range (~0%) in the 99.5% CI.

Thus I think the 5th bin at 3% is still plausible.

The 6th and 7th bins are not plausible though, especially considering the original model I use has a fatter left tail, so I am selling my shares in those.

After this sale that changed the probabilities to 4, 30, 48, 13, 3, <1, <1%

Based on the charts the fourth bin at 13% looks likely to be too high at the moment, but I'm not extremely confident with this limited set of 7 samples, so I'll only sell some of my shares.

After this sale that changed the probabilities to 4, 31, 50, 11, 4, <1, <1%

Given that the 2nd and 3rd bins are now very roughly in the middle of both the 95% and 99.5% ranges for the implied probabilities, I think that's good enough.

Here are the probabilities again now after betting, of Manifold and Polymarket for future comparison:

Edit: I should have noted that Polymarket's probabilities are now consistent with the implied probabilities for the 1.252 point prediction, but only at the extreme lower edge of the 99.5% CI, i.e. assuming a ~0.01 std. dev. On its face that seems intuitively implausible to me based on actual experience, but it does convey that the point prediction implied by its probabilities is consistent with my own.

I put up some decent sized bets early this time. Only expecting really at this point the anomaly to be less than the January 2025 anomaly, so the first four bins I weight roughly equally (fourth slightly less) and the last 3 much less roughly the same as well; I'll see if this rougher and more aggressive prediction pays out this time.

Included a wide range of offsets this time (for reference for confidence) and placed bets assuming a meta-prediction that the rest of the month will need to be offset on the order of +0.1 to 0.2 (predicting GEFS-BC temps will continue under-predicting ERA5 temps in my model). This is a bit of a gamble, but I do recall last month I was predicting on the order of ~1.2C early in January and it ended up being ~1.36C, and the predictions for the last 15 days have been running a bit under, with quite a few errors of 0.1 to 0.15.

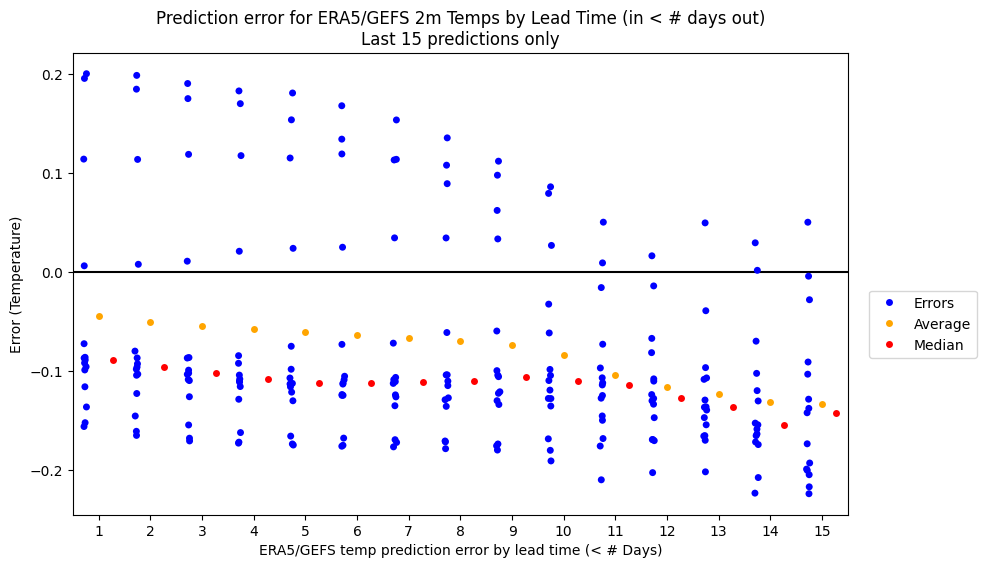

[Notes for self]:

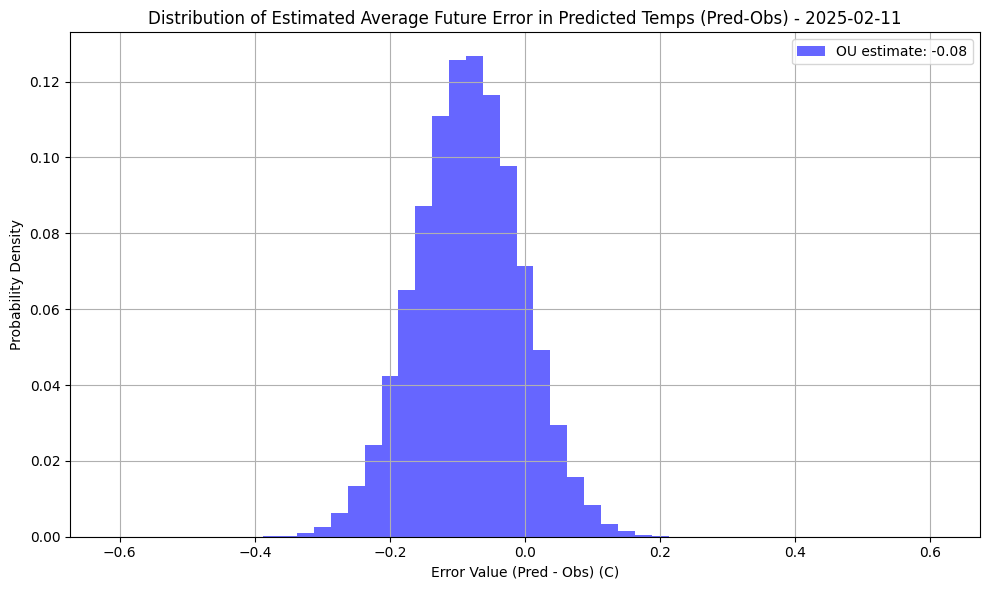

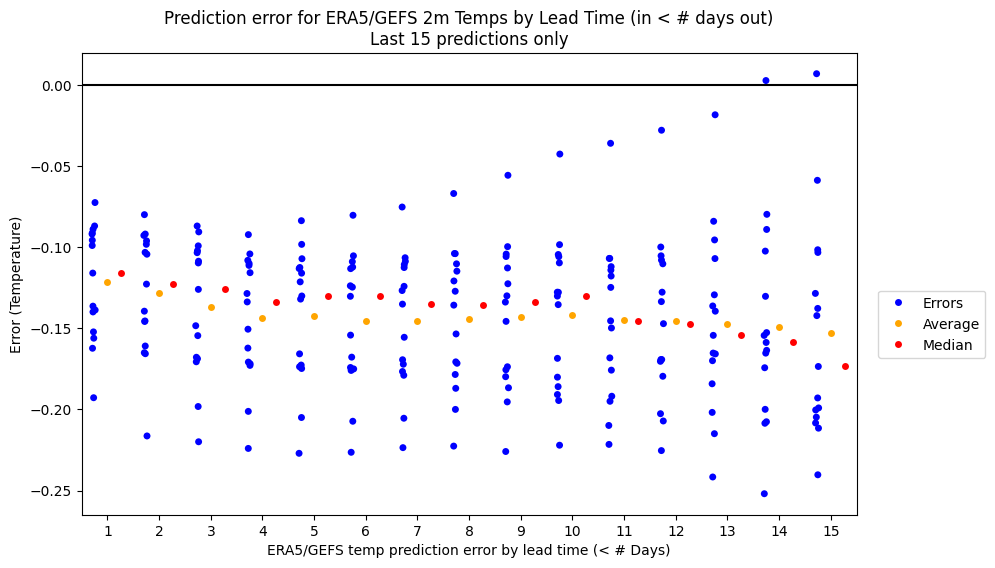

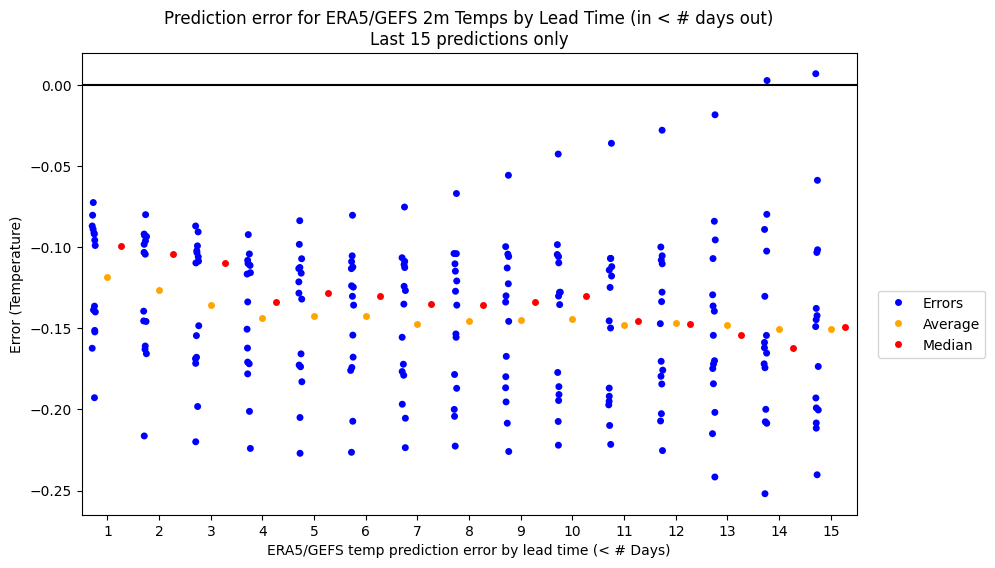

In order to make my meta-prediction estimates for what offset to use slightly more objective I now am experimenting with using an Ornstein-Uhlenbeck process with drift on the historical (1 day) lead time prediction errors (adjusted GEFS-BC - ERA5) I've collected since last June, since runs where the error drifts are common in my predictions.

As of 2025-02-11, theta, mu, sigma are [ 0.06768922 -0.00105138 0.04794998]
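To illustrate what these parameters imply, here is a minimal sketch that assumes the fitted model has the usual form dX = theta*(mu - X) dt + sigma dW with time in days. The parameterization, the function names and the starting error are assumptions for illustration, not the fitting code itself.

```python
import math
import random

# Parameters as quoted above; assumed model: dX = theta*(mu - X) dt + sigma dW, t in days.
THETA, MU, SIGMA = 0.06768922, -0.00105138, 0.04794998

def ou_mean(x0: float, days: float) -> float:
    """Expected error after `days`, decaying exponentially from x0 toward MU."""
    return MU + (x0 - MU) * math.exp(-THETA * days)

def ou_path(x0: float, n_days: int, rng: random.Random) -> list[float]:
    """One simulated daily path using the exact OU transition (no Euler error)."""
    decay = math.exp(-THETA)
    step_sd = SIGMA * math.sqrt((1 - decay ** 2) / (2 * THETA))
    path = [x0]
    for _ in range(n_days):
        path.append(MU + (path[-1] - MU) * decay + rng.gauss(0.0, step_sd))
    return path

x0 = 0.08  # hypothetical starting error, for illustration only
print([round(ou_mean(x0, d), 3) for d in (1, 7, 14)])
print([round(v, 3) for v in ou_path(x0, 5, random.Random(0))])
```

Under this assumed form, the half-life of an error is ln(2)/theta, roughly 10 days, so a recent error is only partly expected to revert within a couple of weeks.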

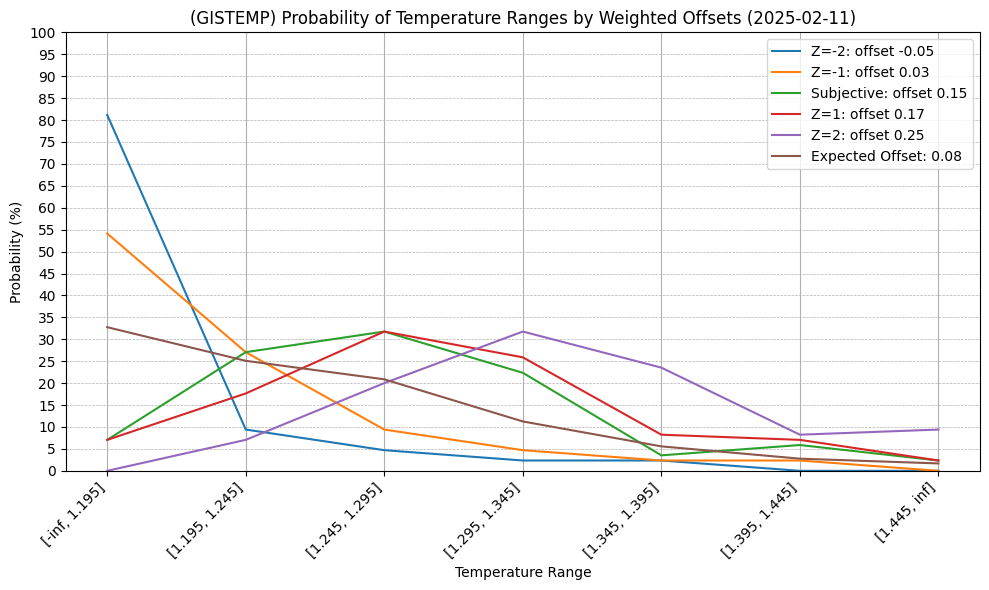

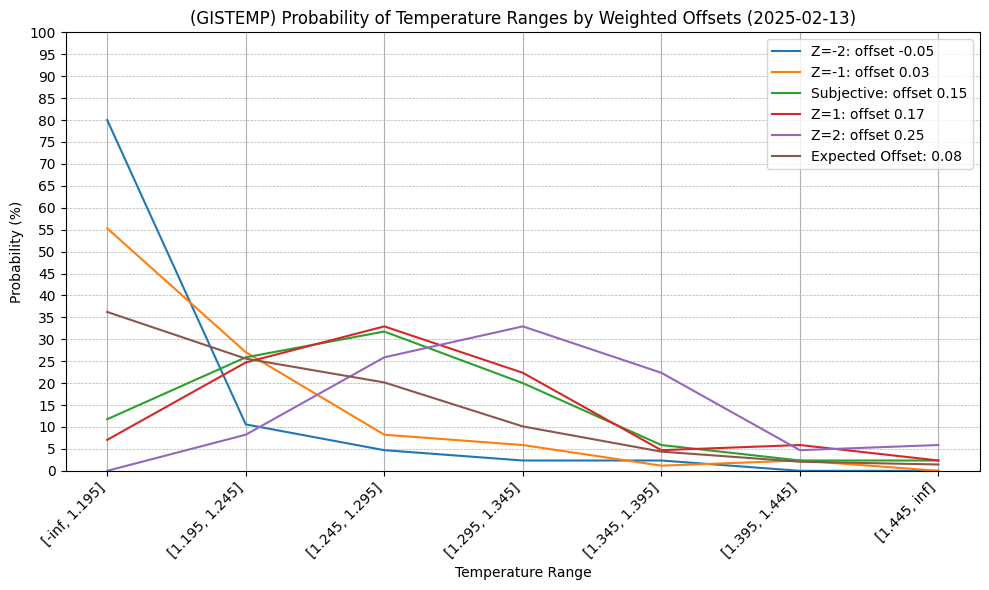

In order not to use a recent, noisy 1-day error as the input, I take the maximum average over a variable window size covering the last 3-10 days of 1-day errors (this is all very ad hoc) as a proxy. For instance, it gives an expected error of ~-0.08 in the latest run. I use that as an objective estimate for the offset (rounded to the nearest 0.025 bin) for the remaining days in February, combine it with a subjective offset (chosen as +0.15), and take the average of the two probabilities.
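One possible reading of that windowing step, as a sketch: the function names and sample errors are made up, and taking the "maximum" by signed value rather than by magnitude is an assumption.

```python
from statistics import mean

def proxy_error(errors: list[float], min_win: int = 3, max_win: int = 10) -> float:
    """Largest trailing-window mean over window sizes min_win..max_win.
    'Largest' is taken by signed value here, which is an assumption.
    Needs at least min_win errors."""
    sizes = range(min_win, min(max_win, len(errors)) + 1)
    return max(mean(errors[-w:]) for w in sizes)

def snap(value: float, step: float = 0.025) -> float:
    """Round to the nearest multiple of step (the offset grid)."""
    return round(value / step) * step

daily_errors = [0.00, 0.00, 0.00, 0.00, 0.00, 0.10, 0.10, 0.10]  # made-up numbers
print(snap(proxy_error(daily_errors)))
```

Averaging over several window lengths keeps a single noisy day from dominating the input to the OU model, at the cost of lagging a genuine regime change by a few days.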

It's fairly wide since there are nearly 3 weeks left of ERA5 data to fill in.

As such I also have added a modified chart that provides (indirect) estimates based on these simulations (using the nearest 0.025 estimates for the offsets). The Z-score probabilities are the Z=+-1, 2 for the offset distribution, not the actual distribution (which would be different from all these different ad-hoc methods I am combining) but I am taking them as an experimental proxy.

It predicts at least that mean reversion won't bring down the average error for the rest of the month all the way to 0, which makes intuitive sense given the recent error trend (which does seem to show a slight reversion), but this means there is still a lot of uncertainty in the remainder of the month.

Unfortunately, it now makes much messier my earlier chart that just includes (some of the) offsets:

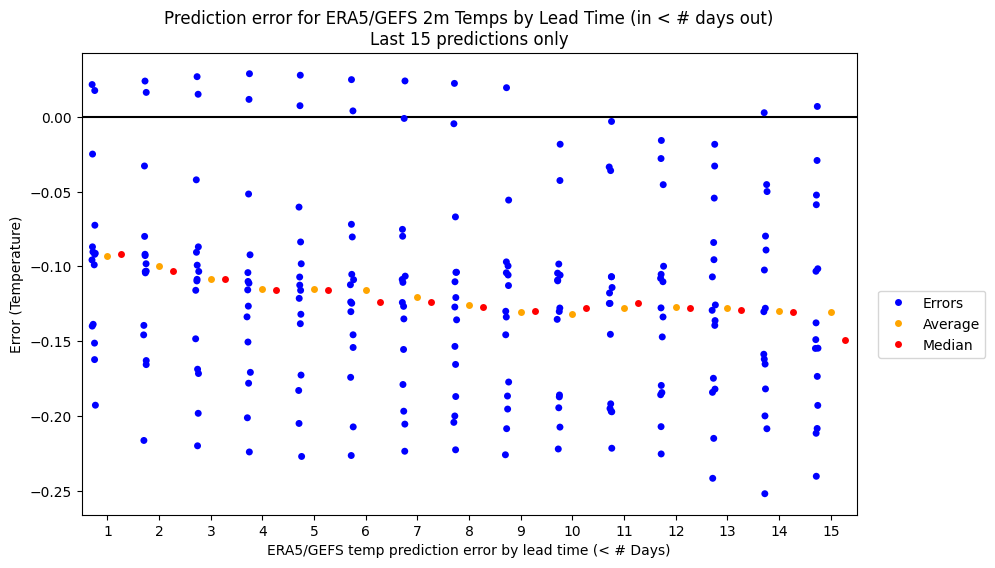

First day not requiring any extrapolation of GEFS data to fill in remainder month and now the first three bins look fairly even again after taking the average between objective/subjective estimates:

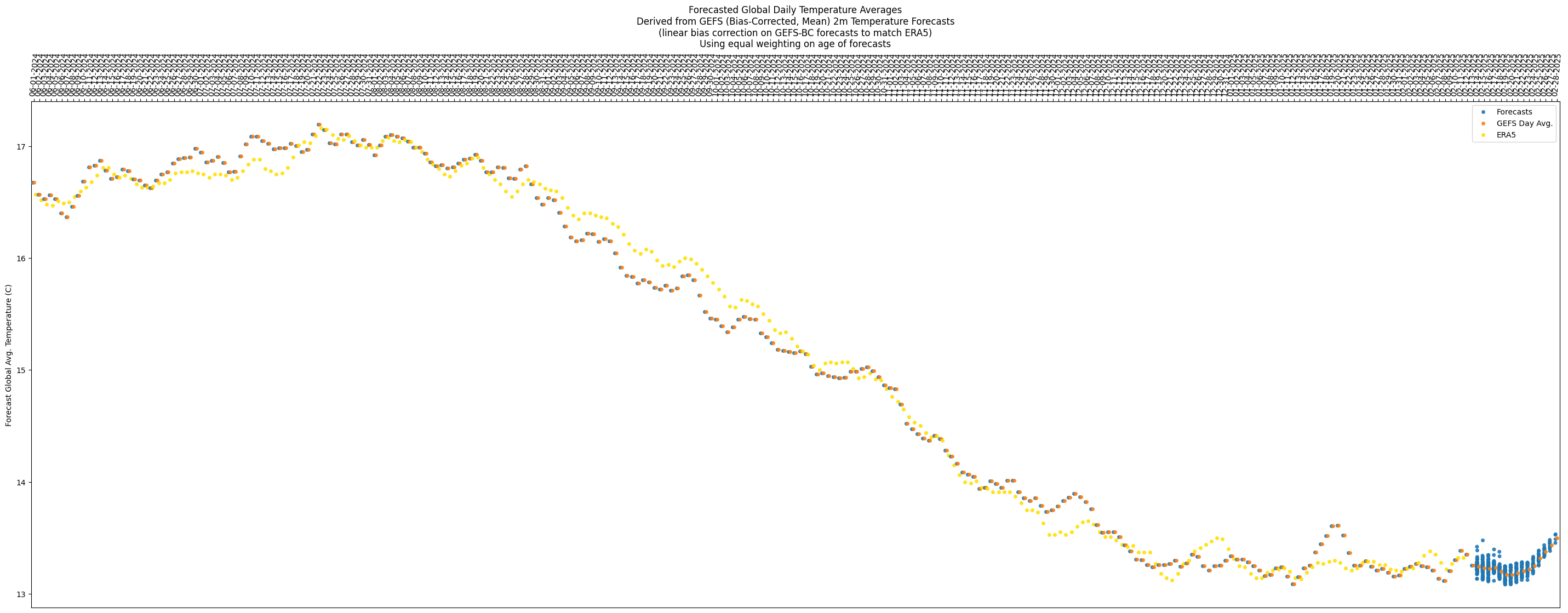

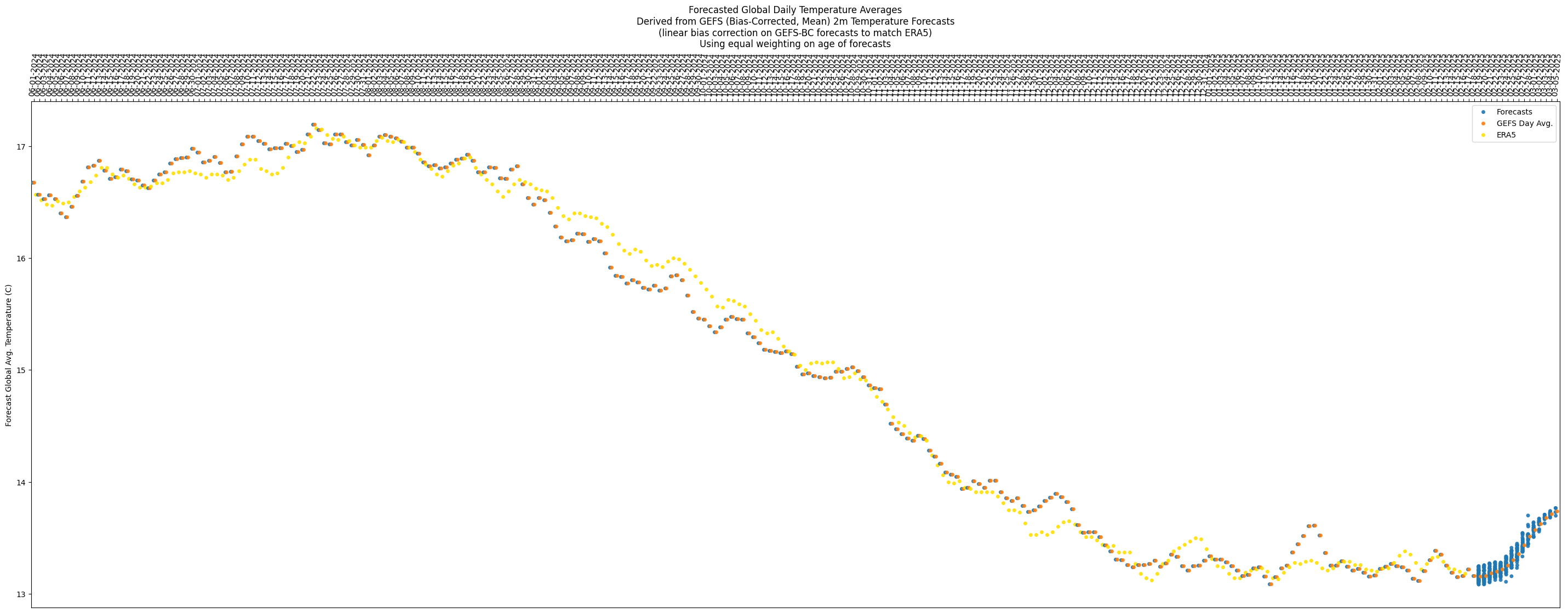

Temps look like it might jump up/down around unpredictably a bit for next week (looks like might not go up too much and stay relatively flat), and for the last week it looks like there is a steady rising trend:

Looks like the slow trend towards mean reversion of the (average) error is continuing:

If the first bin ends up being correct prediction, I'm going to have to start weighting the objective higher than the subjective next month, and vice-versa.

Last couple days of temps from ERA5 have caused the error in the GEFS-ERA5 predictions to revert to near the mean (on the opposite sign too) and I've adjusted my subjective estimate slightly downwards accordingly for the rest of the month, as this is a tiny bit surprising:

Now, roughly the first two bins look most likely at the moment, but with the size of the other bins being narrower, it means the second bin gets significantly less probability individually. Still plenty of days left for this to shift significantly.

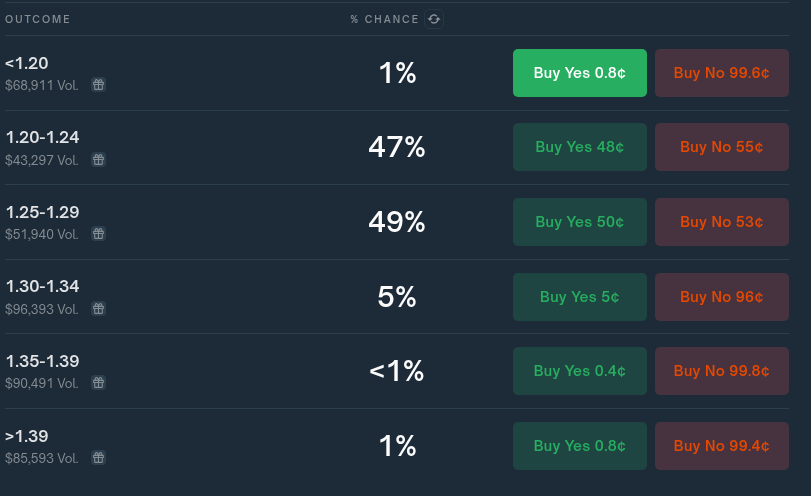

Comparing with polymarket, this time the bins do mostly match, at least the relevant ones do (the last two bins in this question are squashed into the last bin for polymarket):

Per polymarket, not sure what is with the high percents in only two bins (90% of the probability allocated with more than 10+ days left), and further still perplexed why the first bin (<1.20) has such a low % compared to the third bin (1.25-1.29).

Switched to using my own (unrounded) output from gistemp for the gistemp data as the input to the model rather than using the official rounded data for slightly better accuracy (in comparing yesterday's and today's run with the different datasets it only seems to shift the bins 2-3% -- might be marginally worth it).

Comparison of run today with different gistemp data sets:

(official rounded)

(unofficial unrounded)

Polymarket continues to be way overconfident IMO. First bin looks extremely cheap, and third bin likely overpriced:

@LeonardoParaiso I wish i knew. Speculatively, maybe because the first half of the month was relatively warm or January was warmer than expected so February might be as well (this is a prior I started off with as well), or maybe they are using different forecast data (I'm using GEFS-BC as a starting point); I don't know what a ECMWF forecast looks like for the rest of the month. If they are using entirely observations and no forecasts this might explain it but I doubt that it is so simple a story...

The latest error for my model right now though has been reverting towards 0 (and if anything the predictions slightly warmer than observations for the last few days) which implies that prior looks wrong at the moment.

Forecast does show this plateau ending before the end of the month though, with not too much spread in the rise:

Without the major holders posting their methodology I can only speculate wildly on their methodologies. Right now to me from my objective estimates (OU) though it looks nearer to 1.18 or 1.19, rather than 1.26 or 1.27 which is what Polymarket's probabilities seem to imply.

In reality this is only a bit more than ~ 1 std. dev from my own guesses, but is also not too far from the data uncertainty itself so it's difficult to find any fault in the underlying meaning behind these probabilities only with the methodologies themselves.

@parhizj Yes observations look like 2nd warmest Feb so far which puts it in 1.36 to 1.43 range but it will have to warm a lot quickly to avoid dropping below 2nd warmest. 3rd warmest Feb was +1.24C; could easily end up below that.

Often warms from Jan to Feb and Jan at 1.36. La Nina makes drop more likely and 2021 did drop 0.17C so if there was 0.17C drop this year that would be 1.19. Maybe they think beating that drop is unlikely due to stronger La Nina developing late 2020.

Not sure I would want to rely on above to say temperature anomaly won't drop much. Particularly if unusual unexpected warmth is due to prolonged El Nino effects that might disappear after a couple of months with Nino 3.4 below -0.6.

Polymarket traders may have better methods or could be wrong/worse and overconfident. Takes some time to gather data to tell.

@LeonardoParaiso First or second bin is still my guess. First bin is marginally likely now though (assumes the prediction error on the remainder of the month's temps is on average fairly close to zero; OU producing errors of -0.02 to -0.03 for the last few days).

@parhizj While polymarket has increased odds for 1.25 to 1.29 to 70%. How is your performance when going against polymarket consensus?

SST getting surprisingly high again despite La Nina?

https://climatereanalyzer.org/clim/sst_daily/?dm_id=world2

@ChristopherRandles No idea. There are at least three distinct methodologies applied to this market based on timing. (Before end of month, 2-7 days after end of month when we have ghcnm but not ersst, and after we have both ersst and multiple ghcnm).

Part of the problem is that the major bettors in Polymarket's markets might change over time to ones with better methods, or their incentives might change depending on market probs. It would be good to check 7 days prior to the end of each month and compare to see what's going on. I'll try to do it later tonight.

Prior to end of month I think I might be better if I had to guess.

But aenews and some others might be better after the month is over sometimes (latter two cases), and I have noteworthy recollections of failing sometimes at guessing which "run" (which ghcnm set) they end up using for the official data.

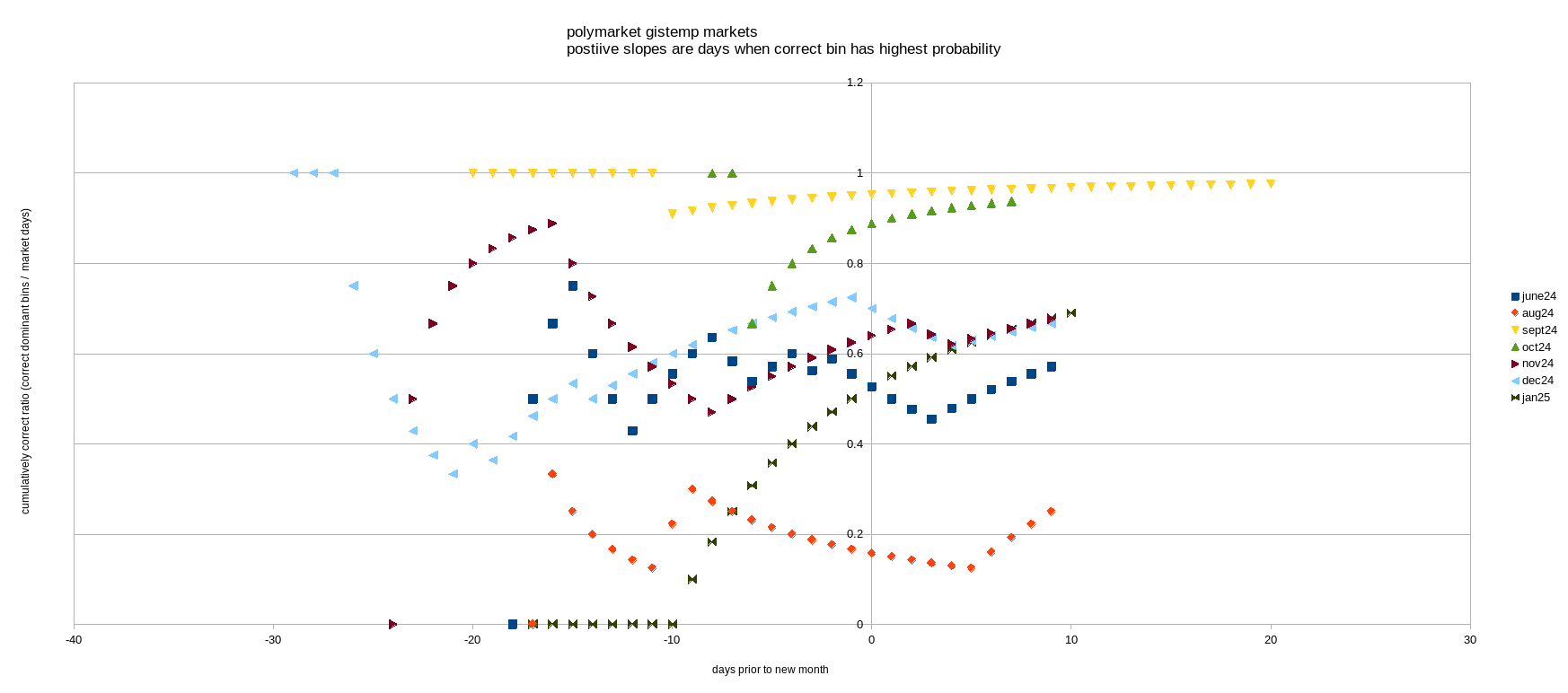

Looked at Last 7 markets on poly.

For your specific question about "consensus" in none of the polymarkets is a question at 70%, 7 days before the end of the month.

The highest is roughly 55% (maybe peaking at 60% around then) a few times. Thus I think my point about it being overconfident in a single bin is still warranted despite the analysis below, especially since the bin isn't a wide lower/upper bin (at the very least I can say it is abnormally high even for its own markets).

The below chart is kind of unique, but from the positive slope you can identify when the correct bin is dominant in the market. For instance for Oct'24, on the 6th last day of Oct (Oct 26) was the only day where the dominant bin wasn't correct (all other days 1.29-1.34 was dominant).

5/7 polymarkets had the correct bin for most of the week before the month concluded (a couple flip the other way afterwards temporarily). (No July Poly)

Keep in mind many of these markets are very much apples and oranges in terms of direct comparisons between Polymarket and Manifold markets, as the bins are almost always different, but the record overall for Manifold isn't good for the week prior. For instance, three of Polymarket's markets have bin widths > 0.05 (June has 0.07 wide bins; Sept and Oct have 0.06 wide bins).

Subjective judgment last week of month:

Polymarket: 5/7 Pass

Manifold: 2/8 Pass (Eep! 😭 )

Polymarket markets with bins consistently correct dominant bin ~week prior:

2024

June Fail

July MISSING

Aug Fail

Sept Pass

Oct Pass

Nov Pass

Dec Pass

2025

Jan Pass

Manifold markets with bins consistently correct dominant bin ~week prior:

2024

June Fail

July Fail

Aug Pass

Sept Fail

Oct Fail

Nov Fail

Dec Fail

2025

Jan Pass

Before you conclude wow Polymarket is so much better, you should also pay attention to the few days AFTER the month is finished where a couple of those 5/7 "Passes" have the wrong bin dominant for a couple days.

Then I just looked at the last predictions I posted in the comments by date that weren't based off of GISTEMP calculations (modeling from mostly ERA5 data):

My temp predictions with all or almost all of month data in:

Month (Pred - Obs) (data from approx +X days relative to next month's 1st day)

June24 +0.05 (+1) (i.e. July 2)

July -0.02 (+1) (From July+ I modify the model: ERA5 debiasing by month when possible using a linear model, year otherwise)

Aug +0.01 (+0)

Sept -0.03 (-1)

Oct -0.01 (+3)

Nov -0.02 (-1)

Dec +0.03 (+1)

Jan25 0.0 (-3)

For July onwards my error is very low in absolute terms once the final ERA5 data is in:

Average error July+ is -0.006 (std dev ~0.021).
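These summary figures can be checked directly from the listed errors. A quick sketch, assuming the quoted std dev is the sample (n-1) standard deviation:

```python
from statistics import mean, stdev

# (Pred - Obs) errors for July 2024 through January 2025, as listed above.
errors = [-0.02, 0.01, -0.03, -0.01, -0.02, 0.03, 0.0]

print(f"mean error: {mean(errors):+.3f}")
print(f"sample std dev: {stdev(errors):.3f}")
```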

You can see that even for a prediction at the end of the month with the std dev almost half the width of the manifold bins (0.05 wide bins) it is only when I am exceedingly lucky the middle or point prediction ends up in the center of the bin that I should be extremely confident of which bin it will end up in (I have probably not bet as well as I could have with regards to this in the past); slightly away from that center and it becomes more difficult.

As for predicting the temps ~7 days out, which is even more challenging: this month I'm using an OU model to hopefully make my meta-predictions better for the remainder of the month. The std. dev. is still large for the remaining temps (~0.05 C), but at least now I hope it's slightly better quantified rather than just guessing purely subjectively based on a recent run.

@parhizj Lmao the manifold stats 😂

Seeing the mess that happened after the months of december and november ended, i'm less inclined to believe poly traders.

@LeonardoParaiso To be self serving I don't think it's like giving an F grade in school so it may look a lot worse than it actually is. As they are probabilities it just means you can't clamp to CORRECT/INCORRECT based on the dominant probabilities; I just did this as a rough measure.

I think it more accurately reflects that 0.05 bins are actually too narrow for that type of correct/incorrect assertion for that phase of prediction. Meaning: prior to actual GISTEMP runs in the days before the data is released, 0.05 wide bins are too narrow to reach very high probabilities like 70%+; doing so I think is overconfidence. The exceptions in these markets are when the bins are one-sided bins that are much wider than 0.05 and the data supports it.

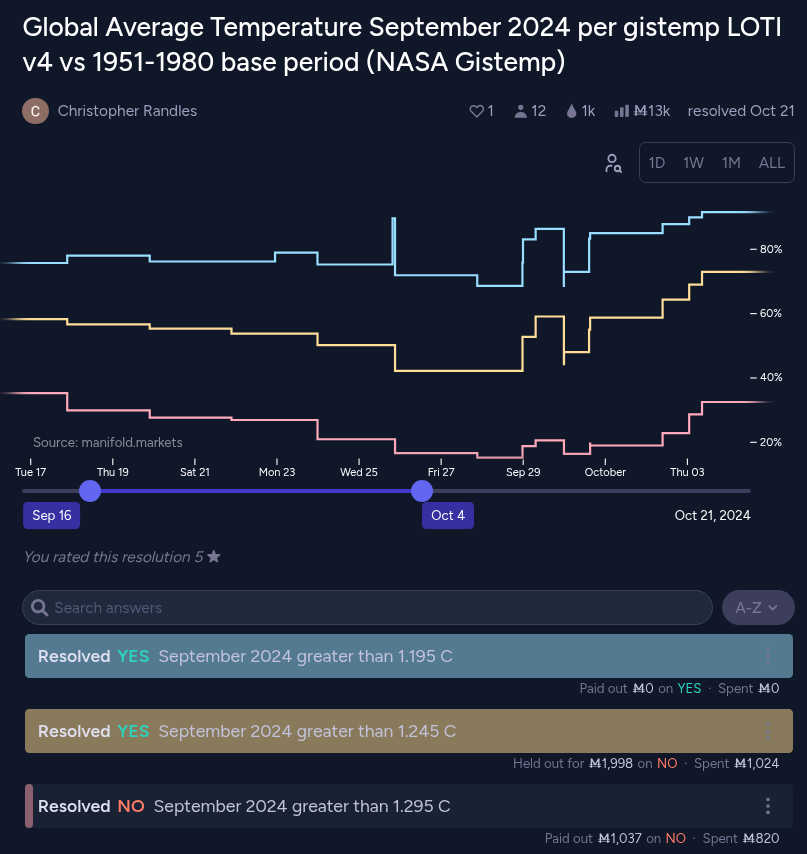

https://manifold.markets/ChristopherRandles/global-average-temperature-septembe

I.e. here is a market where the probabilities demonstrate the issue.

For instance aenews did post one of his predictions (https://manifold.markets/ChristopherRandles/global-average-temperature-septembe#eas6xyjqevw) shortly after the month ends as 1.27 +- 0.06 (this was very close to the actual result 1.26, an error of +0.01). I posted a prediction of 1.23 +- 0.075 (an error of -0.03 ).

Converting to closed sided 'bins', for the end of the month the dominant bin flips between 1.25-1.29 and 1.20-1.24 (the dominant being the thickest space between the two lines) but not by a huge amount as you can see the thickness between the blue-yellow and yellow-red lines are not too disparate (this reflects the narrowness of the bin); a big caveat though is only 2 of the 3 relevant bins are double sided (so the < 1.20 bin reached 31% at one point but not dominant; this bin being one-sided explains the large value).

Based on my own prediction I might have disagreed with >1.245 being bet up to 73% as a one-sided bin, but as a double-sided bin (1.25-1.29) it only gets as high as ~40% despite the prediction nearly being in the middle of the 1.25-1.29 range. I made a much larger prediction mistake a few weeks after the month finished, unrelated to this actual model (betting on what dataset/run the people at NASA would use after the hurricane and, worse, being overconfident about it).

In this respect I think the exclusive double-sided bin questions (which @ChristopherRandles made later on, starting in November), which match the Polymarket type, better reveal any overconfidence (compared to the single-sided multi) and the uncertainty that should be in these narrow bins. I.e. in November none of the bins exceeds 50% prior to the GISTEMP runs (after the GISTEMP data became available is a different matter).